Selling stories

Not that I am particularly experienced with academic life as a first-year PhD student, and not that I got a bad impression of science after just a few months as a rookie researcher at the University of Zurich and the Zurich University of Applied Sciences. No, quite the contrary. But according to senior colleagues, friends, the insistent warnings of contributors to scientific journals, and their echo in the mass media, things are going wrong in science.

What I want to discuss in this post is the long-running trend to tell and sell stories in research. Storytelling in the scientific context usually means fitting observations into seemingly reasonable narratives without rigorously testing all their premises. I extend this interpretation and use "story selling" to refer to the overstretching of arguments, the over-interpretation of data with weak statistical significance, or the overstatement of the relevance of findings, all with the overall goal of impressing people.

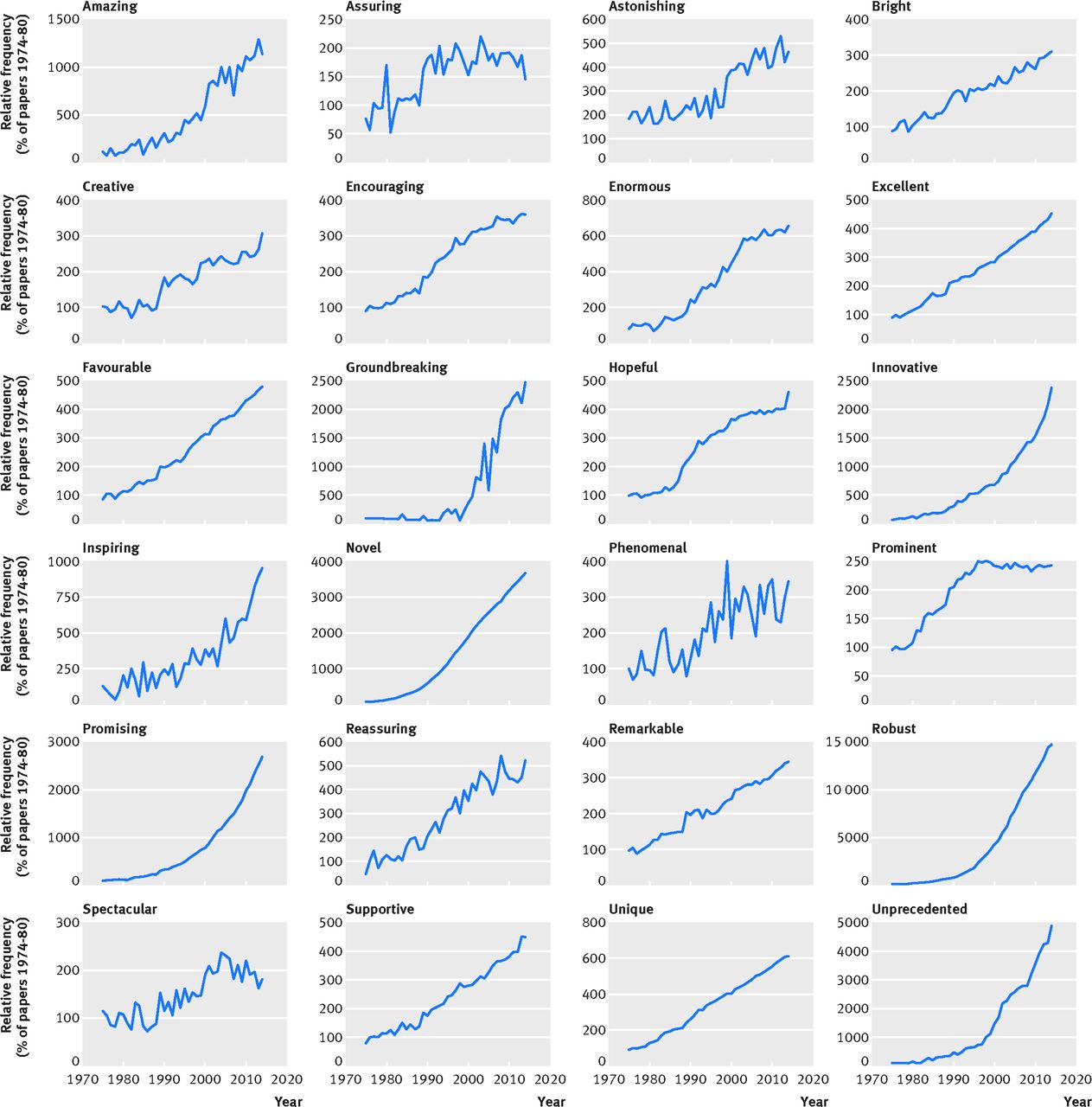

Really, are scientists a horde of show-offs? One could interpret the results recently published by Vinkers et al.1 in this way. That paper reports an increasing use of words with a clearly positive meaning (like novel, phenomenal, astonishing) in scientific abstracts accessible on PubMed and published between 1975 and 2014 (see the figure below). Despite some methodological limitations (no semantic analysis, no account of how scientific writing itself evolved over four decades), these results easily conjure up an image of bragging scientists. The authors distance themselves from such a conclusion and argue instead that scientists, always under pressure to publish, are forced to use overly optimistic language to get published at all. Furthermore, they see a link to positive outcome bias, where positive results with a clear interpretation are favored by the scientific community (and not just the authors!) over negative results and results with an unclear interpretation.

Figure: Evolution of the frequency with which some positive words occur in abstracts of scientific papers accessible on PubMed between 1975 and 2014, relative to the frequency of these words in the period of 1975-1980. Reproduced from Vinkers et al., BMJ 2015;351:h6467.1

One obvious implication of seeing only a positive selection of observations and arguments is that it becomes harder to judge the validity of statements. Of course, we were taught to read papers critically, always bearing in mind that the author may have used obfuscating language to hide shortcomings of the study. But the problem with improper scientific reporting is the potential cost to the scientific community (think, for example, of all the sleepless nights of desperate doctoral students attempting to make a published protocol work as claimed). The key word in this context is reproducibility of results, which is apparently an issue in at least some disciplines of the life sciences2,3,4 and probably worth another 10 blog posts alone…

To put things into perspective: many factors affect the reproducibility of observations, but proper reporting is central, and story selling leads to distorted reporting. Obviously, it is hard to judge the prevalence of story selling in the scientific community, and I refrain from doing that here. Some may hold the view that story selling comes close to lying and bluffing. There is indeed a fine line between them. I believe that, while there may be fraudulent intent in some cases, story selling is driven much more by psychological (“reward fishing”) and cultural (aversion to accepting failure) factors.

Some journals and funding institutions have taken measures to counteract this development and promote reproducible research5,6,7. There are also several Open Science and Open Data initiatives that aim to advance scientific progress by sharing data and method implementations, and not just the results, with a broader community.

Just a few weeks ago, another initiative was launched at the University of Zurich: Sciencematters. Its aim is to put emphasis on observations rather than on their wrapping into stories. Or as they put it: “Stories can wait. Science can’t.” Their new publication platform features, among other things, the possibility to publish single observations rather than complete stories, and a triple-blinded peer review system where “editors’, reviewers’ and authors’ identities are unknown to all”.8 Of course, the success of this platform is determined by its contributors, so give it a thought and consider whether you could take part.

1 C. Vinkers et al. Use of positive and negative words in scientific PubMed abstracts between 1974 and 2014: retrospective analysis. BMJ 2015; 351:h6467. Link.

2 C. Begley et al. Drug development: Raise standards for preclinical cancer research. 2012. Nature 483, 531–533. Link.

3 J. Couzin-Frankel. The Power of Negative Thinking. 2013. Article that appeared in Science. Link.

4 C. Chambers. The changing face of psychology. 2014. The Guardian Online article, accessed on 07.03.2015.

5 Nature Editorial. Repetitive flaws: Strict guidelines to improve the reproducibility of experiments are a welcome move. 2016. Online article, accessed on 07.03.2016.

6 Webpage of the Society for Neuroscience. Policy position on Research Practices for Scientific Rigor: A Resource for Discussion, Training, and Practice. Online article, accessed on 07.03.2015.

7 S. Landis et al. A call for transparent reporting to optimize the predictive value of preclinical research. 2012. Nature 490(7419):187-91. Link.

8 Sciencematters. Editorial: Why publish single observations? Because Science Matters. March 2nd 2016. Online article, accessed on 07.03.2016.